We upgraded 15 Timefold Solver quickstarts to 2.0 in under 10 minutes using an OpenRewrite recipe. Here’s exactly what that migration looked like.

Discover the techniques Timefold Engineers deploy to make your Python code faster.

Subscribe to Planned, planning education for real-world stuff.

Performance is a top priority for Timefold Solver, since any performance gains means we can find the same solution in a less amount of time (or alternatively, a better solution in the same amount of time). This is also true for Python, which is typically one or two orders of magnitudes slower than Java. Through a variety of techniques, we are able to make Python reach 25% of Java’s throughput. Read on to find out the methods we deploy to make Python’s performance better, and see how Timefold Solver for Python performance compares to Java on a variety of PlanningAI models.

Note: Usage of Python in this article refers exclusively to the CPython implementation.

Python’s performance challenges are mostly due to design decisions:

The Global Interpreter Lock, or GIL for short, is a lock that prevents multiple threads from executing Python code at the same time. This is because Python’s core data structures, such as dict, list and object are not thread-safe.

When the GIL was introduced (Python 1.5, in the year 1998), multi-threaded programs were rare (most computers only supported one thread at the time), and as such, using a GIL simplifies Python extension developer experience and does not degrade single-threaded performance. The GIL was a good idea for its time, but gradually became worse as multi-threaded programs and computers became more common. Currently, Python is working on removing the GIL, but it will be several releases before it is widely available.

Python is dynamically typed, which means the result type of operations cannot be determined before their execution. Moreover, many operations such as addition and attribute lookup are also dynamic, since they look up attributes on their operands' types. As a result, this seemly simple python code:

def add(x, y):

return x + yDoes something a lot more complex underneath:

def ignore_type_error_if_builtin_type(arg_type, method, x, y):

try:

return method(x, y)

except TypeError:

if arg_type in [int, str, list, ...]:

return NotImplemented

raise

def add(x, y):

x_type = type(x)

x_add = x_type.__dict__.get('__add__')

y_type = type(y)

y_radd = y_type.__dict__.get('__radd__')

if x_add is None and y_radd is None:

raise TypeError('...')

elif y_radd is None:

out = ignore_type_error_if_builtin_type(x_type, x_add, x, y)

if out is NotImplemented:

raise TypeError('...')

return out

elif x_add is None:

out = ignore_type_error_if_builtin_type(y_type, y_radd, y, x)

if out is NotImplemented:

raise TypeError('...')

return out

else:

if x_type != y_type and issubclass(y_type, x_type):

out = ignore_type_error_if_builtin_type(y_type, y_radd, y, x)

if out is NotImplemented:

out = ignore_type_error_if_builtin_type(x_type, x_add, x, y)

if out is NotImplemented:

raise TypeError('...')

else:

out = ignore_type_error_if_builtin_type(x_type, x_add, x, y)

if out is NotImplemented:

out = ignore_type_error_if_builtin_type(y_type, y_radd, y, x)

if out is NotImplemented:

raise TypeError('...')

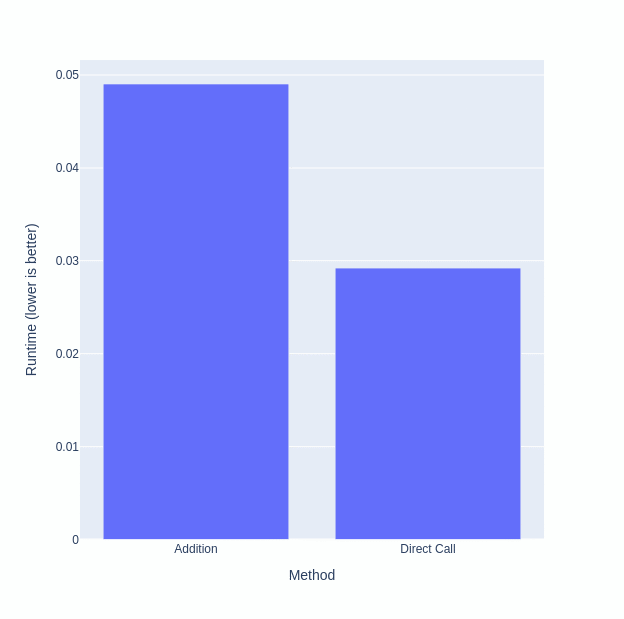

return outFor custom types, this complexity causes addition to have about double the overhead of a direct method call:

import timeit

source = \

'''

class A:

def __init__(self, value):

self.value = value

def __add__(self, other):

return self.value + other.value

a = A(0)

b = A(1)

'''

timeit.timeit('a + b', source)

# 0.04903000299964333

timeit.timeit('add(a, b)', f'{source}\nadd=A.__add__')

# 0.029219600999567774

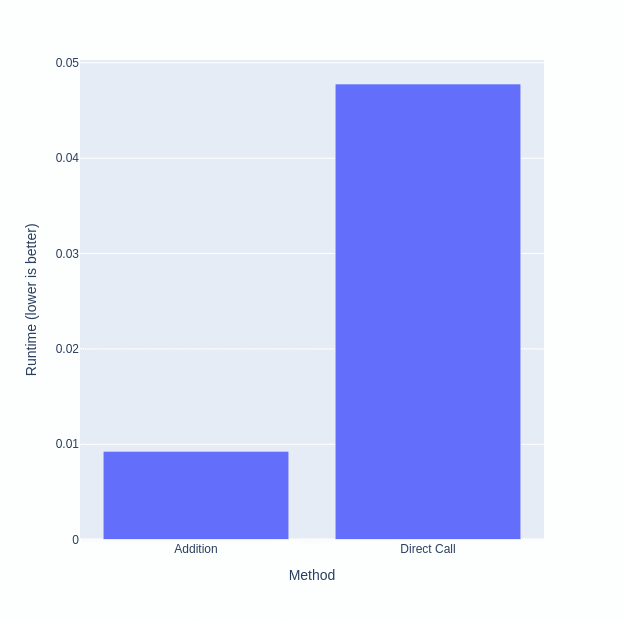

However, Python specializing adaptive interpreter speeds up the code for builtin types, such as int.

import timeit

timeit.timeit('a + b', 'a=0\nb=1')

# 0.009246169998732512

timeit.timeit('add(a, b)', 'a=0\nb=1\nadd=int.__add__')

# 0.04777865600044606

Python supports monkey-patching; that is, the behavior of types and functions can be modified at runtime. For example, you can change the implementation of a type’s method:

class Dog:

def talk(self):

return 'Woof'

a = Dog()

print(a.talk()) # Prints 'Woof'

Dog.talk = lambda self: 'Meow'

print(a.talk()) # Prints 'Meow'Monkey-patching prevents method inlining, an optimization technique where a method is copied into its call sites, removing call overhead and allowing further optimization to be done.

Python currently lacks a JIT compiler, meaning it cannot optimize code based on runtime characteristics (such as how often a branch is taken). This will probably change in the future, as a experimental JIT compiler for Python is currently being worked on.

However, all the above is insignificant to what was our biggest performance obstacle…

A proxy is a class that acts as a facade for arbitrary classes. In order for Java to call a Python method (or vice versa), a Foreign Function Interface must be used. A Foreign Function Interface, or FFI in short, is an interface that allows one program language to call functions of another. In Java, there are currently two different FFI implementations:

JNI and the foreign API both returns a raw pointer (in JNI, a long, and in foreign, a MemorySegment) for Python objects. However, a pointer has little use in Java code, which expects interfaces like Comparable or Function. Typically, proxies are used to convert the pointer into Comparable, Function, or some other interface.

If a proxy is empty, it is only about 1.63 slower than a direct call. However, proxies are often not empty. Often, they need to do complex tasks such as converting the arguments and results of methods. How slow are we talking? Consider this simplistic benchmark:

public class Benchmark {

public static void benchmark(Runnable runner) {

var start = System.nanoTime();

for (int i = 0; i < 10_000; i++) {

runner.run();

}

var end = System.nanoTime();

System.out.printf("%.9f\n", (end - start) / 1_000_000_000d);

}

public static void main(String[] args) {

System.out.println("Cold");

benchmark(() -> {});

System.out.println("Hot");

benchmark(() -> {});

}

}Which we can invoke via JPype

import jpype

jpype.startJVM(classpath=['.'])

benchmark = jpype.JClass('Benchmark')

@jpype.JImplements('java.lang.Runnable')

class Runner:

@jpype.JOverride

def run(self):

pass

print("Cold")

benchmark.benchmark(Runner())

print("Hot")

benchmark.benchmark(Runner())or GraalPy:

import java.Benchmark

def run():

pass

print("Cold")

java.Benchmark.benchmark(run)

print("Hot")

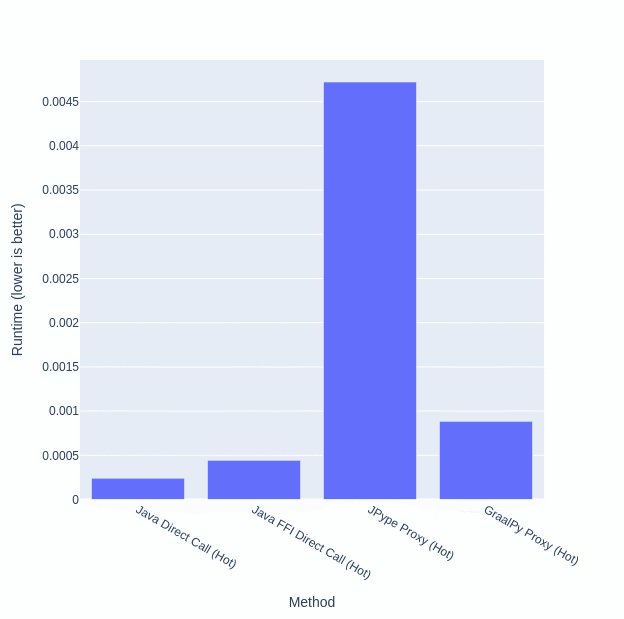

java.Benchmark.benchmark(run)Here are the runtimes for running benchmark with an empty Runnable in Java versus an empty Runnable implemented as a Python proxy:

| Method | Runtime |

|---|---|

| Java Direct Call (Cold) | 0.000232610 |

| Java Direct Call (Hot) | 0.000240525 |

| Java FFI Direct Call (Cold) | 0.005904000 |

| Java FFI Direct Call (Hot) | 0.000442477 |

| JPype Proxy (Cold) | 0.006906666 |

| JPype Proxy (Hot) | 0.004721682 |

| GraalPy Proxy (Cold) | 0.027417475 |

| GraalPy Proxy (Hot) | 0.000883732 |

Despite the Python code literally doing nothing, a JPype proxy is 967.102% slower than a direct Python call when hot. GraalPy fares better at only 100% slower than a direct Python call. Things get even worse when there are arguments and return types involved (for example, with java.util.function.Predicate). Here, we pass a Python list to Java (which calls a Python proxy function on each item in a list).

import jpype

jpype.startJVM(classpath=['.'])

benchmark = jpype.JClass('Benchmark')

class Item:

def __init__(self, value):

self.value = value

@jpype.JImplements('java.util.function.Predicate')

class Runner:

@jpype.JOverride

def test(self, item):

return item.value

def proxy(item):

return jpype.JProxy(jpype.JClass('java.io.Serializable'), inst=item, convert=True)

arraylist = jpype.JClass('java.util.ArrayList')

items = arraylist([proxy(Item(i % 2 == 0)) for i in range(10000)])

print('Cold')

benchmark.benchmark(Runner(), items)

print('Hot')

benchmark.benchmark(Runner(), items)or GraalPy:

import java.Benchmark

class Item:

def __init__(self, value):

self.value = value

def test(item):

return item.value

items = [Item(i % 2 == 0) for i in range(10000)]

print("Cold")

java.Benchmark.benchmark(test, items)

print("Hot")

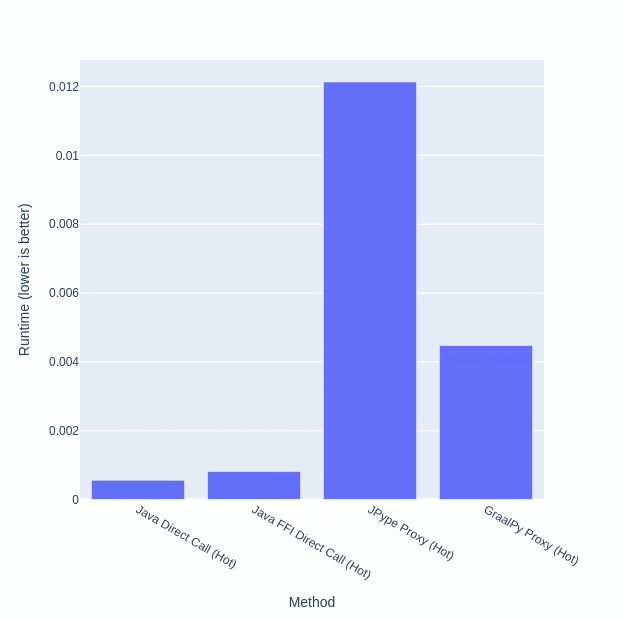

java.Benchmark.benchmark(test, items)| Method | Runtime |

|---|---|

| Java Direct Call (Cold) | 0.000819039 |

| Java Direct Call (Hot) | 0.000559228 |

| Java FFI Direct Call (Cold) | 0.004265137 |

| Java FFI Direct Call (Hot) | 0.000807377 |

| JPype Proxy (Cold) | 0.015507570 |

| JPype Proxy (Hot) | 0.012130092 |

| GraalPy Proxy (Cold) | 0.070494332 |

| GraalPy Proxy (Hot) | 0.004472111 |

Here, JPype is 1402.41% slower than calling Python directly when hot! GraalPy also degraded, becoming 453.906% slower than calling Python directly.

In particular, when the arguments are passed to Java, they are converted to Java proxies. However, when the arguments are passed back to Python, they are not converted back to Python objects. Instead, a Python proxy is created for the Java proxy, creating additional overhead for every single operation.

Given the problems with proxies, the obvious solution is to just manage the opaque Python pointers and do direct Python calls. Unfortunately, using FFI directly is hard:

So how can we avoid the performance losses from:

with a single solution? The (not so simple) answer is to translate Python’s bytecode and objects to Java bytecode and objects.

However, there is a not so simple part:

Annoying as these problems are, they are significantly easier to debug and test than using FFI directly; we can rely on JPype’s deep magic to keep Java’s and Python’s garbage collectors in sync, and we can have deterministic reproducers and test cases.

Is it truly worth all the hassle of translating Python’s bytecode? Well, the only way to know is to measure the speed before and after the bytecode translation. Bytecode translation was originally implemented in OptaPy (which Timefold Solver for Python is a fork of), which included an option to use the proxies instead. Since the bytecode generation became more mature, the option to use proxies was removed from Timefold Solver for Python.

Thus, to compare the performance of proxies versus translated bytecode, we will use the latest (and probably last) release OptaPy (9.37.0b0) on Python 3.10.

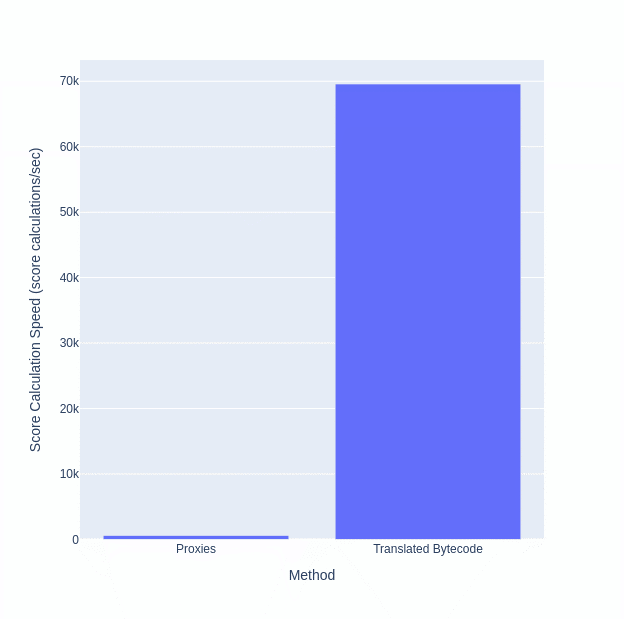

Here are the score calculation speeds of using proxies versus translated bytecode in OptaPy’s school timetabling quickstart (higher is better).

Note: OptaPy’s School Timetabling quickstart is not equivalent to Timefold’s School Timetabling quickstart: there are bugs in the constraints that are fixed in the Timefold versions but were never addressed in OptaPy’s version.

| Method | Score Calculation Speed |

|---|---|

| Proxies | 599/sec |

| Translated Bytecode | 69558/sec |

In short, using translated bytecode multiplied our throughput by 100 (i.e. an over 100,000% performance increase)!

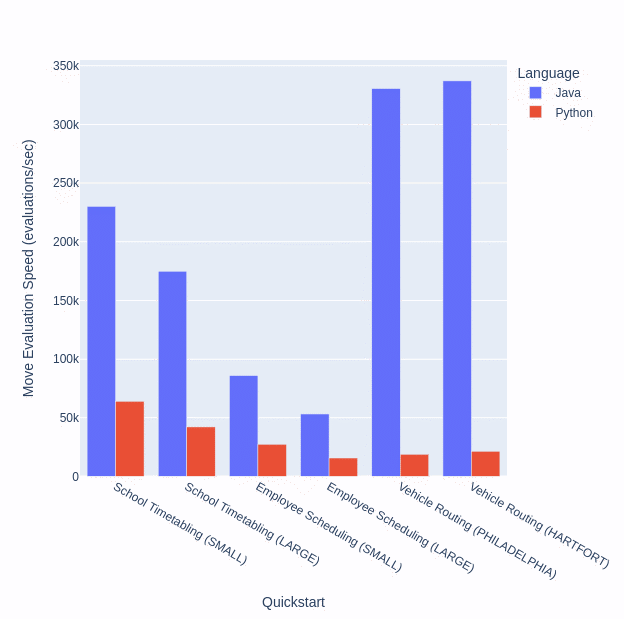

Now finally to the topic of the blog post: how fast is Timefold Solver for Python on various PlanningAI models? Below are the move evaluation speeds of the Java and Python Timefold 1.15.0 quickstarts:

| Quickstart | Dataset | Language | Move Evaluation Speed |

|---|---|---|---|

| School Timetabling | SMALL | Java 22.0.1 | 230143/sec |

| School Timetabling | SMALL | Python 3.12 | 64034/sec |

| School Timetabling | LARGE | Java 22.0.1 | 174881/sec |

| School Timetabling | LARGE | Python 3.12 | 42249/sec |

| Employee Scheduling | SMALL | Java 22.0.1 | 85926/sec |

| Employee Scheduling | SMALL | Python 3.12 | 27462/sec |

| Employee Scheduling | LARGE | Java 22.0.1 | 53265/sec |

| Employee Scheduling | LARGE | Python 3.12 | 15753/sec |

| Vehicle Routing | PHILADELPHIA | Java 22.0.1 | 330492/sec |

| Vehicle Routing | PHILADELPHIA | Python 3.12 | 18912/sec |

| Vehicle Routing | HARTFORT | Java 22.0.1 | 337094/sec |

| Vehicle Routing | HARTFORT | Python 3.12 | 21443/sec |

In short:

If the Python bytecode is translated to Java bytecode, why is it still significantly slower than Java? There are several differences between the translated bytecode and normal Java bytecode:

BigInteger.In many cases, this performance loss is acceptable, as it allows you to write your code in pure Python instead of Java, meaning:

Note: Python libraries might use functions defined in C, which naturally have no corresponding Python bytecode, and thus cannot be translated to Java bytecode.

Usage of C‑defined functions in constraints causes a necessary fallback to Python, and the Java objects need to be converted back to Python objects. This causes a massively performance degradation that has roughly the same performance as proxies.

C‑defined functions can be used outside of constraints without issue.

To make Timefold’s goal of making PlanningAI accessible to everyone a reality, everyone should be able to write in the language of their choice. For many, that language is Python.

To make writing PlanningAI models in Python practical, the Python solver must match a reasonable fraction of the Java Solver’s performance. This is a challenge, as Python provides a myriad of obstacles to performance. But like any obstacle, they can be overcome.

By overcoming these obstacles, we managed to make the Python solver match 25% of the Java solver’s performance, making writing PlanningAI models in Python practical. That being said, for use cases that truly require the most performant code, it is probably best to stick to Java.

We upgraded 15 Timefold Solver quickstarts to 2.0 in under 10 minutes using an OpenRewrite recipe. Here’s exactly what that migration looked like.